Another week, another 'AI browser.' The fatigue is real. It feels like everyone is just slapping a chat window on a Chromium wrapper and calling it innovation. We've seen it from Microsoft with Edge, from Perplexity with Comet, from a dozen startups you’ve already forgotten. It’s a tired playbook.

So, when OpenAI dropped "ChatGPT Atlas," the collective yawn from the developer community was audible. "Another wrapper, right?"

Wrong.

Well, technically, it is a Chromium wrapper. And it's also something MUCH scarier. I just dove deep on "OWL," OpenAI's new architecture for Atlas. And I saw demons. This was a terrifying read. This isn't another reskin. This is a "chaotic rewrite of things that shouldn't be touched".

The public sees a slightly cleaner browser. Engineers see a suicidal, brilliant, and terrifyingly complex piece of system architecture.

Let's decode this.

What Happened (The 30,000-Foot View)

OpenAI announced Atlas as a "new way to browse the web with ChatGPT by your side". The marketing claims they "reimagined the entire architecture of a browser" to deliver "instant startup responsiveness" and "a strong foundation for agentic use cases".

That last part is the key.

See, the "instant startup" claim is... debatable. One developer immediately tested it and concluded, "That was not that fast... I'm going to call [__] on that one".

So, if the 'instant startup' is marketing cover, what is the REAL goal? It’s those other two benefits: isolation and agents. The new architecture separates the main Atlas app from the Chromium runtime. This means "If [Chromium's] main thread hangs, Atlas doesn't. If it crashes, Atlas will stay up".

This isn't for you. This is for the AI. They didn't build a stable browser for you; they built a crash-proof playground for the agent.

Business/Technical Angle (The Deep-Dive)

This is where the true madness begins. The 'reimagined architecture' isn't just a marketing line. It's a fundamental, and wildly complex, decoupling of the browser's UI from its engine.

Meet OWL: The Decoupled Demon

So how does it work? Meet OWL: The OpenAI Web Layer.

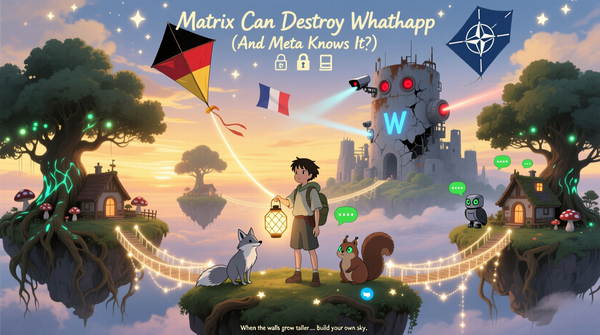

This is the core concept. OWL runs "Chromium's browser process outside of the main Atlas app process". Look at this diagram (based on Image 1). The "Atlas App" is the native client. The "Owl App" is the Chromium host. They are two separate entities.

This is NOT an Electron app. Electron ships your JavaScript UI and the Chromium engine as one bundle, and the app's main process is Chromium's browser process. If that process goes down, the app goes down with it. OWL does the exact opposite: it violently separates them.

The "Atlas App" (the client) is "built almost entirely in SwiftUI and AppKit". It's a clean, native macOS app. The "Owl App" (the host) is the entire C++ Chromium engine, now running as an "isolated service layer". This is a client-server architecture on your own damn laptop.
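To make the isolation claim concrete, here is a toy model in Python, not anything from OpenAI's codebase. The "host" is a throwaway child process that dies on purpose, and the "client" simply observes the exit code and keeps going. The names `CRASHING_HOST`, `run_host`, and `client_main` are made up for this sketch.

```python
import subprocess
import sys

# Hypothetical stand-in for the Chromium host: a child process that dies abruptly.
CRASHING_HOST = "import os; os._exit(42)"


def run_host(code: str) -> int:
    """Launch the 'host' as a separate OS process and return its exit code."""
    result = subprocess.run([sys.executable, "-c", code])
    return result.returncode


def client_main() -> str:
    """The 'client' notices the host died and carries on instead of crashing."""
    exit_code = run_host(CRASHING_HOST)
    if exit_code != 0:
        # A real client would presumably restart the host here; we just report it.
        return f"host exited with {exit_code}; client still running"
    return "host exited cleanly"


if __name__ == "__main__":
    print(client_main())
```

The point is only the process boundary: a crash on one side becomes an exit code on the other, not a shared fate.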

Why do this? It's an organizational solution. OpenAI has a core culture: "Every new engineer merges a small change in the afternoon of their first day". But "Chromium can take hours to check out and build". You can't have both.

Unless... you decouple. With OWL, the Atlas (SwiftUI) app builds in "minutes". The C++ (Chromium) "Owl Host" is shipped "internally as a pre-built binary". 99% of their app engineers never need to touch or build Chromium. It's a brilliant, high-stakes solution to a human logistics problem.

The Mojo Bridge of Faith (and C++)

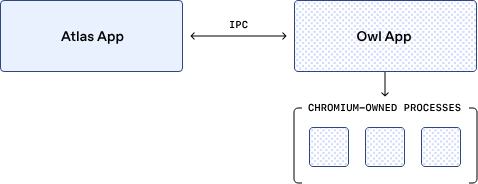

But here's the catch. If you have two separate processes, in two different languages (Swift and C++), how do they talk?

This is the lynchpin. "They communicate over IPC, specifically Mojo". Mojo is "Chromium's message passing system", a deep, internal, and complex C++ API.

And this is the magic: OpenAI "wrote custom Swift... bindings for Mojo".

Look at this diagram (based on Image 2). You have a WebView class written in Swift directly calling a WebViewHost class written in C++.

This isn't a public, stable API. This is a custom-built, hand-rolled bridge of faith. OpenAI's engineers had to dive into the C++ guts of Chromium and build an inter-language-process-communicator from scratch.
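If "typed messages over IPC" feels abstract, here is a rough Python sketch of the general idea. It is emphatically not Mojo: the `Navigate` message, the JSON payload, and the 4-byte length prefix are all assumptions invented for illustration, and a local socket pair stands in for the real transport.

```python
import json
import socket
import struct
from dataclasses import asdict, dataclass


@dataclass
class Navigate:
    """Hypothetical message type: ask the host to load a URL in a view."""
    view_id: int
    url: str


def send_message(sock: socket.socket, msg: Navigate) -> None:
    # Frame = 4-byte big-endian length + JSON body (our assumption, not Mojo's format).
    payload = json.dumps({"type": "Navigate", "body": asdict(msg)}).encode("utf-8")
    sock.sendall(struct.pack(">I", len(payload)) + payload)


def recv_exact(sock: socket.socket, n: int) -> bytes:
    """Read exactly n bytes, since recv() may return fewer than requested."""
    data = b""
    while len(data) < n:
        chunk = sock.recv(n - len(data))
        if not chunk:
            raise ConnectionError("peer closed the connection")
        data += chunk
    return data


def recv_message(sock: socket.socket) -> Navigate:
    (length,) = struct.unpack(">I", recv_exact(sock, 4))
    envelope = json.loads(recv_exact(sock, length).decode("utf-8"))
    if envelope["type"] != "Navigate":
        raise ValueError(f"unknown message type: {envelope['type']}")
    return Navigate(**envelope["body"])


def demo() -> Navigate:
    """Send one message from the 'client' end to the 'host' end."""
    client_end, host_end = socket.socketpair()
    try:
        send_message(client_end, Navigate(view_id=1, url="https://example.com"))
        return recv_message(host_end)
    finally:
        client_end.close()
        host_end.close()
```

Both sides have to agree on the message shape and the framing. That shared contract is exactly the thing that breaks when one side changes without the other.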

This custom bridge is the real secret sauce. And it's a permanent liability. When Google refactors Mojo (which they do), OpenAI's bridge breaks. When Apple breaks Swift's ABI, the bridge breaks. OpenAI has willingly accepted this permanent, expensive R&D cost as the price for driving Chromium like a service.

The 'Terrifying' Pixel Pipeline

Okay. So, they can send messages. But how do the pixels from the C++ process get to the Swift UI?

This is the part that is truly terrifying. They don't copy the pixels. That's slow, janky, and what a 'wrapper' would do.

No. They project the layer.

The Atlas app's NSView (the native UI element) embeds the Chromium layer using the... "private CALayerHost API".

A developer who read this reacted with pure, unadulterated terror: "Apparently they're using a private API for this. Definitely not a thing that can go wrong. Oh god. Oh god.".

He's right. Using a private Apple API is a cardinal sin. It's a deal with the devil. It's fast, it's perfectly native, and it's a guarantee that a future macOS update will break your entire application. The diagram in Image 3, showing NSView hosting a CALayerHost, is a high-wire act without a net.

This is the most arrogant, high-risk, high-performance rendering solution possible. OpenAI is betting they are either too big to fail or rich enough to fix it 24/7 when Apple inevitably breaks it.

The Event Loop from Hell

And if the rendering was scary, the input model is... [__].

Since the UI (Swift) and the engine (C++) are separate, all input has to be translated. When you click, the Swift app "translate[s] platform events like a macOS NSEvent... into Blink's WebInputEvent model" and sends it to Chromium.

But what if the webpage doesn't handle that click? (Like a right-click on empty space).

This is where the chaotic re-translation kicks in. The event is "returned back to the client". The Swift app then has to "re-synthesize an NSEvent" (a fake, synthetic native event) and "give the rest of the app a chance to handle the input".

A developer analyzing this loop just said: "Are you kidding?... they have to recreate a fake synthetic NS event... Jesus [__] Christ.".

This is the loop from hell:

- Click: (Native NSEvent in Swift)

- Translate: (To WebInputEvent)

- Send: (To C++ via Mojo)

- Reject: (Chromium doesn't handle it)

- Return: (Back to Swift via Mojo)

- Re-synthesize: (A new, fake NSEvent to show a context menu)
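The round trip above can be sketched in a few lines of Python. This is a toy model under heavy assumptions: `NativeEvent` and `WebInputEvent` here are simplified stand-ins for the real types, the IPC hop is just a function call, and `page_handles` is an invented way of saying "the page has a handler for this event type".

```python
from dataclasses import dataclass
from typing import Optional, Set


@dataclass(frozen=True)
class NativeEvent:
    """Simplified stand-in for a platform event such as NSEvent."""
    kind: str
    x: int
    y: int
    synthetic: bool = False


@dataclass(frozen=True)
class WebInputEvent:
    """Simplified stand-in for the engine-side input event."""
    type: str
    x: int
    y: int


def translate(event: NativeEvent) -> WebInputEvent:
    # Client side: convert the platform event into the engine's model.
    return WebInputEvent(type=event.kind, x=event.x, y=event.y)


def host_handle(event: WebInputEvent, page_handles: Set[str]) -> bool:
    # Host side: report whether the page consumed the event.
    return event.type in page_handles


def resynthesize(event: WebInputEvent) -> NativeEvent:
    # Client side: rebuild a native-looking event, flagged as synthetic.
    return NativeEvent(kind=event.type, x=event.x, y=event.y, synthetic=True)


def dispatch(event: NativeEvent, page_handles: Set[str]) -> Optional[NativeEvent]:
    """Return None if the page handled it, else a synthetic event for the app."""
    web_event = translate(event)
    if host_handle(web_event, page_handles):
        return None
    return resynthesize(web_event)
```

Note the `synthetic` flag. Anything that replays events wants a way to tell replayed input from real input, otherwise the same click could bounce around forever.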

The debugging must be a nightmare. And the security? As the developer noted, this is a massive attack surface. What if a malicious page can trick this rejection loop?

Market Implications (Why Bother With This Madness?)

So why? Why build this Rube Goldberg machine? Why choose this "hellish", complex, and high-risk architecture?

The answer is one, single slide in their deck: Agent Mode.

This entire, terrifying stack was built to solve the "unique challenges" of "agentic browsing features". It solves two problems for the AI.

It's ALL About Agent Mode

Problem 1: Giving the Agent "Eyesight"

The AI model "expects a single image of the screen as an input". But on the web, UI elements like <select> dropdowns or color pickers render in separate windows or layers. A simple screenshot won't see them.

This is where OWL shines. Because they control the compositor, they can "composite those pop-ups back into the main page image at the corresponding and correct coordinates". The AI gets one, perfect, complete frame. This isn't DOM-parsing, like Perplexity's Comet. This is true, robust, visual understanding. If a human can see it, the AI can see it.
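Here is the compositing idea reduced to a toy in Python. A "frame" is just a grid of characters, and each popup comes with the top-left coordinates where it should land. The `Frame` type and the `composite` function are invented for this sketch; the real thing works on GPU surfaces, not lists.

```python
from typing import List, Tuple

Frame = List[List[str]]  # rows of "pixels"; a toy stand-in for a real bitmap


def composite(page: Frame, popups: List[Tuple[Frame, int, int]]) -> Frame:
    """Paint each (popup, left, top) onto a copy of the page, clipping at the edges."""
    out = [row[:] for row in page]  # copy so the original page is left untouched
    height = len(out)
    width = len(out[0]) if out else 0
    for popup, left, top in popups:
        for dy, row in enumerate(popup):
            for dx, pixel in enumerate(row):
                x, y = left + dx, top + dy
                if 0 <= x < width and 0 <= y < height:
                    out[y][x] = pixel
    return out


if __name__ == "__main__":
    page = [["."] * 6 for _ in range(4)]
    dropdown = [["#", "#"], ["#", "#"]]
    for row in composite(page, [(dropdown, 2, 1)]):
        print("".join(row))
```

The model only ever sees the merged frame, so it never has to know the dropdown lived in a different window.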

Problem 2: Giving the Agent "Hands" (Sandboxed)

How does the AI click and type? This is the other masterstroke. Agent-generated events are routed "directly to the renderer, never through the privileged browser layer".

This "preserves the sandbox boundary". The AI, even if it goes rogue, CANNOT synthesize "keyboard shortcuts that make the browser do things unrelated to the web content". They built a custom, sandboxed input pipe just for the AI. It's the ultimate "look but don't touch (too much)" model.
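A small Python sketch of that routing split, with everything simplified. `BrowserLayer`, `Renderer`, the `SHORTCUTS` table, and `handle_agent_key` are hypothetical names for illustration. The only point is that user keys pass through the layer that interprets shortcuts, while agent keys skip it.

```python
from dataclasses import dataclass, field
from typing import List

# Hypothetical browser-level shortcuts that web content should never trigger.
SHORTCUTS = {"cmd+t": "new_tab", "cmd+q": "quit"}


@dataclass
class Renderer:
    """Stand-in for the sandboxed renderer: it only ever sees key input for the page."""
    received: List[str] = field(default_factory=list)

    def handle_key(self, combo: str) -> None:
        self.received.append(combo)


@dataclass
class BrowserLayer:
    """Stand-in for the privileged layer that interprets shortcuts for real users."""
    renderer: Renderer
    actions: List[str] = field(default_factory=list)

    def handle_user_key(self, combo: str) -> None:
        action = SHORTCUTS.get(combo)
        if action is not None:
            self.actions.append(action)  # privileged browser action
        else:
            self.renderer.handle_key(combo)  # ordinary page input


def handle_agent_key(renderer: Renderer, combo: str) -> None:
    # Agent input bypasses BrowserLayer entirely, so shortcuts are just keys to the page.
    renderer.handle_key(combo)
```

With this shape, the same "cmd+t" opens a tab when a human presses it and is just another keystroke delivered to the page when the agent sends it.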

The Windows-Sized Betrayal

But wait. What about Windows?

I'll be blunt. "This is never coming to Windows. God.".

The tech stack is "almost entirely in SwiftUI and AppKit". That's Apple-native. That private CALayerHost API? It does not exist on Windows. OpenAI would need a total, ground-up rewrite.

We all watched The Browser Company (Arc) try to port its Swift app to Windows. It was a buggy, clunky, performance disaster. It was so bad they dropped SwiftUI for their next product.

OpenAI knows this. This is not an accident. They are intentionally ignoring 80% of the market.

Why? Because they aren't building a Chrome competitor. They are building a premium, pro-level tool for the high-spend, high-value, Mac-using developer and creator class. The same demographic that buys Apple hardware. This is a signal. Atlas is not a commodity; it's a precision instrument.

Punchline Close (The Final Take)

Let's be clear. Atlas is not a browser.

It is a cockpit. It is a high-performance, terrifyingly-complex, custom-built harness designed to give an AI perfect vision and sandboxed hands.

The decoupling (OWL). The custom bridge (Mojo). The private API rendering (CALayerHost). The insane event loop. It is ALL justified by the need for "Agent Mode."

OpenAI didn't build a better browser for us. They built a better body for it.

This engineering is visionary. It's also suicidal. And it's done.

OpenAI just gave its AI eyes and hands. The only question that matters now is: what will they do with them?